UPDATE (2020): If you search for the history of graphic lambda calculus and it’s relations with chemlambda then please use:

- Graph rewrites, from emergent algebras to chemlambda or arXiv:2007.10288 [cs.LO] or (pdf) or (figshare)

For more information Chemlambda page is the place to go, or arXiv:2003.14332 [cs.AI].

If you look only for graphic lambda calculus then see

(journal) (arxiv) – Graphic lambda calculus. Complex Systems 22, 4 (2013), 311-360

UPDATE (2020): The chemlambda collection of animations, salvaged from G+, is now available in an enhanced form. Instructive, with lots of links.

UPDATE (2019): There are three recent projects which continue after GLC. You may be most interested in the lambda calculus to chemlambda parser, mainly because you can play with it exclusively in javascript. I gradually add much more info than previously.

UPDATE (2019): You can explore graphs and reductions in chemlambda and in Interaction Combinators at this page. If you want to use this blog for learning more about graphic lambda calculus and chemlambda, then you should read the more recent explanatory posts, starting with this one.

UPDATE (2017): Graphic lambda calculus (GLC) and chemlambda are two different formalisms which were developed in parallel. In my opinion GLC is by far bested by chemlambda and also the main interest in chemlambda comes from molecular computers, not decentralized computing.

In GLC_computations_examples are collected some constructions with GLC which are earlier than chemlambda.

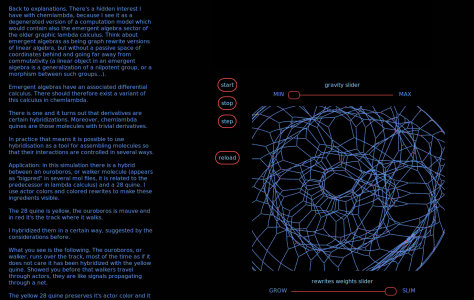

This is the logo of chemlambda:

UPDATE 06.11.2016: For the moment the best entry point to this universe is the README from the active branch of the chemlambda repository. As for some important ideas to take home, these are:

- The whole algorithm, with it’s various parts, is the model, not only the rewrite system.

- The model has chemlambda as a proof of concept.

- Go as much as possible without global semantics and control

- Everything is local and decentralized.

- Lambda calculus is only a tiny part, serving only as inspiration for more interesting ideas, like for example understanding the predecessor and turning it into the first artificial life organism in chemlambda, later to give the notion of a chemlambda quine

- Space is not at all understood in other approaches. Evidence of a thing is not the same as a thing.

- Space is a plug-in, of the same nature as the rest of the model.

- Nature is the fastest computer.

_______________________________________

This is a tutorial for graphic lambda calculus. Up to now the best exposition is arXiv:1305.5786 but there are some facts not covered there.

See also the related chemlambda aka “chemical concrete machine” tutorial.

Visit the chemlambda demos page to SEE how this works.

Read the vision page to understand what is good for.

FAQ: chemlambda in real and virtual worlds

A decentralized computing model is described in the Distributed GLC tutorial.

Sources:

- M. Buliga, Graphic lambda calculus, Complex Systems 22, 4 (2013), 311-360, arXiv:1305.5786

- M. Buliga, L.H. Kauffman, Chemlambda, universality and self-multiplication, arXiv:1403.8046 , accepted in the

ALIFE 14: The Fourteenth International Conference on the Synthesis and Simulation of Living Systems, July 30th – Aug 2nd 2014, New York - The tag graphic lambda calculus from the chorasimilarity blog

- The page “Emergent algebras / Computing with space / Graphical calculi“

- M. Buliga, L.H. Kauffman, GLC actors, artificial chemical connectomes, topological issues and knots, arXiv:1312.4333

- Distributed GLC project.

——————————————

What is graphic lambda calculus?

Graphic lambda calculus is a formalism working with a set of oriented, locally planar, trivalent graphs, with decorated nodes (and also wires, loops and a termination

gate node). The set is described in the Introduction to graphic lambda calculus.

There are moves acting on such graphs, which can be local or global moves.

Graphic lambda calculus contains differential calculus in metric spaces, untyped (or simply typed) lambda calculus and that part of knot theory which can be expressed by using knot diagrams.

——————————————

The set GRAPH.

This is the set of graphs which are subjected to the moves. Any assembly of the following elementary graphs, called “gates” is a graph in .

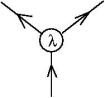

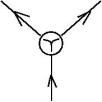

- The

graph, which corresponds to the lambda abstraction operation from lambda calculus, see lambda terms. It is this:

But wait! This gate looks like it has one input (the entry arrow) and two outputs (the left and right exit arrows respectively). This could not be a graph representing an operation, because an operation has two inputs and one output. For example, the lambda abstraction operation takes as inputs a variable name and

a term and outputs the term

.

Remember that the graphic lambda calculus does not have variable names. There is a certain algorithm which transforms a lambda term into a graph in , such that to any lambda abstraction which appears in the term corresponds a

gate. The algorithm starts with the representation of the lambda abstraction operation as a node with two inputs and one output, namely as an elementary gate which looks like the

gate, but the orientation of the left exit arrow is inverse than the one of the

gate. At some point in the algorithm the orientation is reversed and we get

gates as shown here. There is a reason for this, wait and see.

It is cool though that this gate looks like it takes a term

as input and it outputs at the left exit arrow the variable name

and at the right exit arrow the term

. (It does not do this, properly, because there will be no variable names in the formalism, but it’s still cool.)

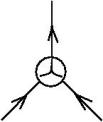

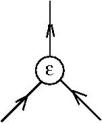

- The

graph, which corresponds to the application operation from lambda calculus, see lambda terms. It is this:

This looks like the graph of an operation, there are no clever tricks involved. The sign I use is like a curly join sign.

- The

graph, which will be used as a FAN-OUT gate, it is:

- The

graph. For any element

of an abelian group

(think about

as being

or

) there is an “exploration gate”, or “dilation gate”, which looks like the graph of an operation:

(Therefore we have a family of operations, called “dilations”, indexed by the elements of an abelian group. This is a structure coming from emergent algebras.)

We use these elementary graphs for constructing the graphs in . Any assembly of these gates, in any number, which respects the orientation of arrows, is in

.

Remark that we obtain trivalent graphs, with decorated nodes, each node having a cyclical order of his arrows (hence locally planar graphs).

There is a small thing to mention though: we may have arrows which input or output into nothing. Indeed, in particular the elementary graphs or gates are in and all the arrows of an elementary graph either input or output to nothing.

Technically, we may imagine that we complete a graph in , if necessary, with univalent nodes, called “leaves” (they may be be decorated with “INPUT” or “OUTPUT”, depending on the orientation of the arrow where they sit onto).

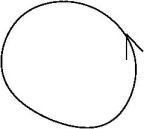

- For this reason we admit into

arrows without nodes which are elementary graphs, called wires

and loops (without nodes from the elementary graphs, nor leaves)

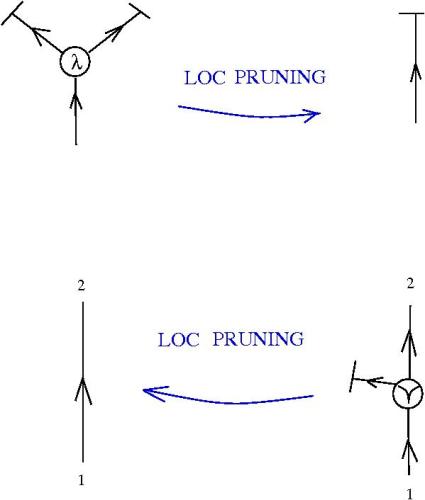

- Finally, we introduce an univalent gate, the termination gate:

The termination gate has an input leaf and no output.

and now, any graph which is a reunion of lines, loops and assemblies of the elementary graphs (termination graph included) is in .

——————————————

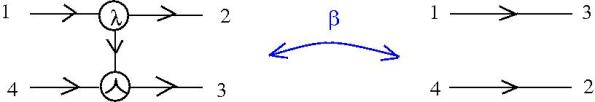

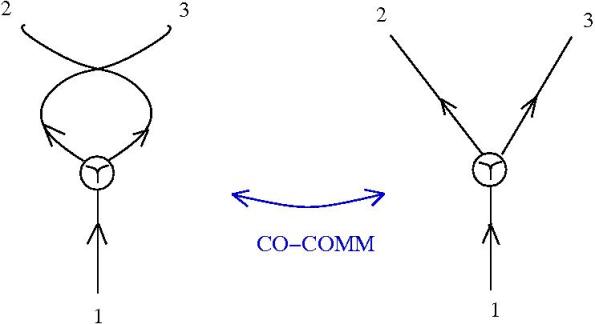

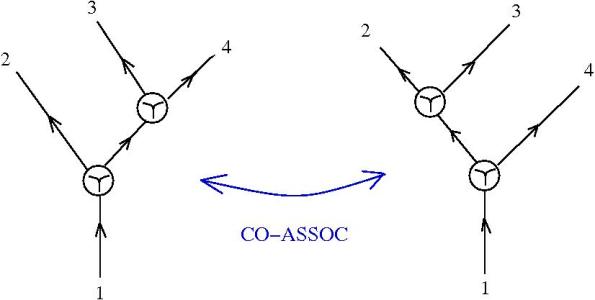

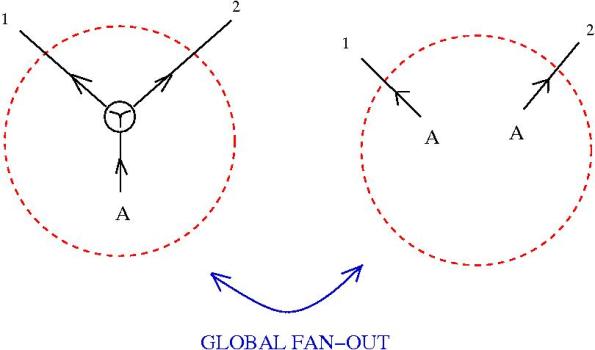

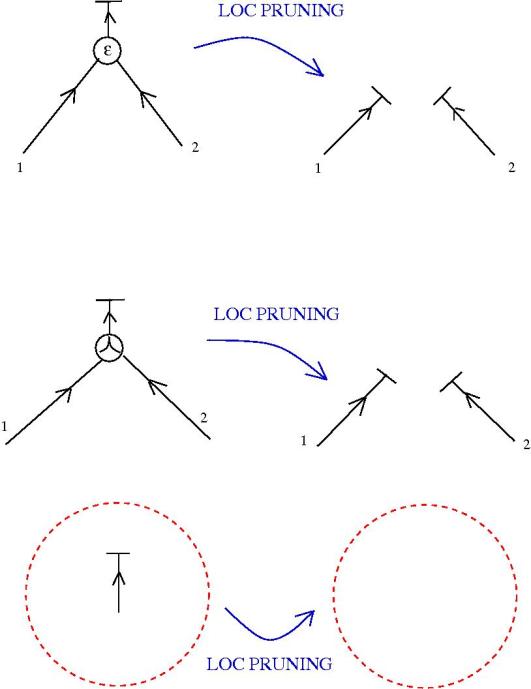

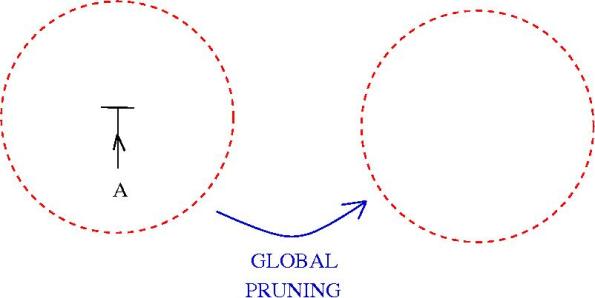

The moves.

Still in beta version:

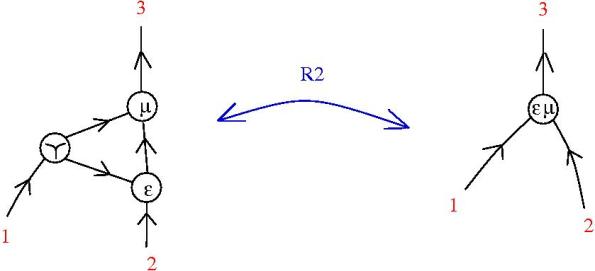

- The extended beta move, introduced here.

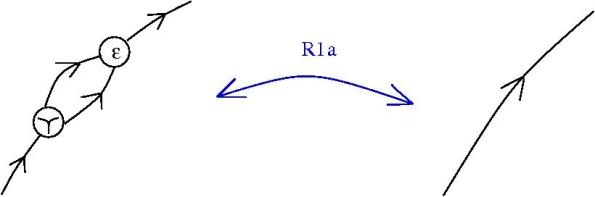

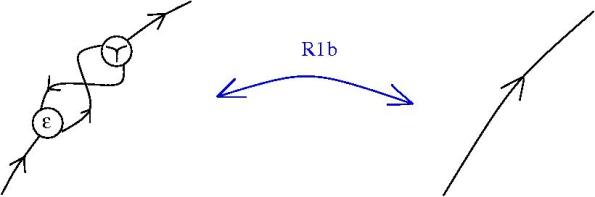

- The generating moves, discussed here, here and here.

Yet more:

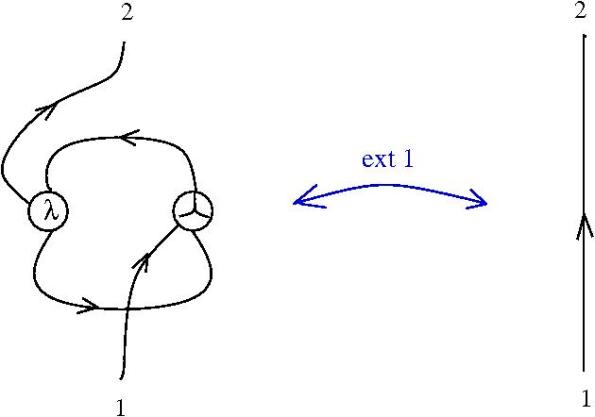

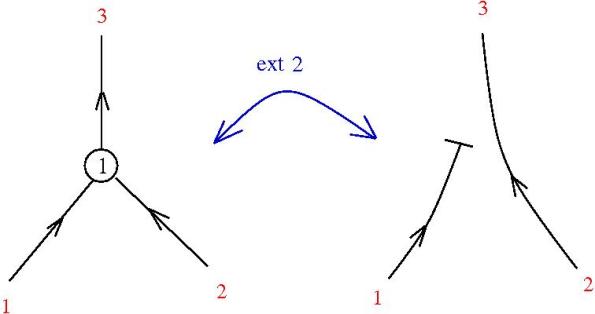

- The ext1 move, if you need extensionality.

If there is no oriented path from “2” to “1” outside the left hand side picture then one may replace this picture by an edge. Conversely, if there is no oriented path connecting “2” with “1” then one may replace the edge with the graph from the left hand side of the following picture:

——————————————

Macros.

- Crossings in lambda calculus (graphic beta rule as braiding)

- Emergent algebra crossings

- The zipper and examples

- Packing arrows (II) and the strand networks sector

- Sets, lists and order of moves in graphic lambda calculus

——————————————

Sectors.

A sector of the graphic lambda calculus is:

- a set of graphs, defined by a local or global condition,

- a set of moves from the list of all moves available.

The name “graphic lambda calculus” comes from the fact that there it has untyped lambda calculus as a sector. In fact, there are three four important sectors of graphic lambda calculus:

- untyped lambda calculus sector, which contains all graphs in

which are obtained from untyped lambda calculus terms by the algorithm described here. The moves of this sector are: graphic beta move, fan-out moves, pruning moves. The article arxiv:1207.0332 describes this sector in detail.

- emergent algebra sector, which contain all graphs in

described in the article arxiv:1103.6007, via the emergent algebra crossing macros, and the following moves: dual graphic beta move (which forms with the graphic move the extended beta move), fan-out moves, pruning moves, emergent algebra moves. See arXiv:1305.5786 section 5 for a precise description, as well as the post Emergent algebras as combinatory logic part IV (and check out the trackbacks).

- knot and tangle diagrams sector, defined by using crossings in lambda calculus macro and the Reidemeister moves as described in the post Generating set of Reidemeister moves for graphic lambda crossings, which are composite moves obtained from the graphic beta move and some local fan-out moves. The article arxiv:1211.1604 describes this sector in detail.

- Freedom sector of graphic lambda calculus

_______________

Simply typed version of graphic lambda calculus:

- Simply typed graphic lambda calculus

- Example: decorations of S,K,I combinators in simply typed graphic lambda calculus

_______________

Where I use it (work in progress) and other useful links:

In these two posts is used the tree formalism of the emergent algebras.

- Parallel transport in spaces with dilations, I

- What group is this? (Parallel transport in spaces with dilations, II)

In order to understand how this works, see the following posts, which aim to describe finite differential calculus as a graph rewriting system:

- Dictionary from emergent algebra to graphic lambda calculus (I)

- Dictionary from emergent algebra to graphic lambda calculus (II)

- Dictionary from emergent algebra to graphic lambda calculus (III)

The chemical concrete machine project:

- A chemical concrete machine for lambda calculus

- Local FAN-IN eliminates global FAN-OUT (I)

- Local FAN-IN eliminates GLOBAL FAN-OUT (II)

- Chemical concrete machine, short list of gates and moves

- Ancient Turing machine helps Chemical concrete machine

- Spiral patterns in the full zipper graph

- Extreme functional programming done with biological computers

- Chemical concrete machine, detailed (I)

- Chemical concrete machine, detailed (II)

- Chemical concret machine, detailed (III)

- Chemical concrete machine, detailed (IV)

- Chemical concrete machine, detailed (V)

- Chemical concrete machine, detailed (VI)

The neural networks project:

The graphic lambda calculus appears to me shockingly similar to Lamping’s graphs for optimal reduction [1], and their simplifications [2]. Are you aware of such close similarities?

1. Lamping, J., 1990. An Algorithm for Optimal Lambda Calculus Reduction, in: Proceedings of the 17th ACM SIGPLAN-SIGACT Symposium on Principles of Programming Languages, POPL ’90. ACM, New York, NY, USA, pp. 16–30. doi:10.1145/96709.96711

2. Asperti, A., Guerrini, S., 1998. The Optimal Implementation of Functional Programming Languages, Cambridge tracts in theoretical computer science. Cambridge University Press.

Yes, of course. When I wrote the first GLC paper I was not aware of Wadsworth and Lamping, but starting from https://arxiv.org/pdf/1403.8046.pdf I corrected this. However, there are many important differences, some of them mentioned at the README page https://github.com/chorasimilarity/chemlambda-gui/blob/gh-pages/dynamic/README.md . First, even if we stay in GLC, not in chemlambda, the lambda node is different. Then, even if interaction graphs and GLC/chemlambda are both graph rewriting systems, GLC and later chemlambda are not at all restricted to lambda calculus. See the GLC article sections 3,4 for lambda calculus and then see the following sections for other stuff which does not exists elsewhere. Btw this artificial restriction to lambda calculus is actually almost incomprehensible to me, as a mathematician, along with the careless definition of the graph classes (like for example in the usual treatment when there is no word about the fact that even if you start and sometimes end with a graph which can be canonically associated with a lambda term, the intermediary steps in the computation are in no way bounded to be graphs associated to lambda terms). A third distinction is that chemlambda has no need for anything which is not local, like croissants. A forth distinction is that chemlambda is a (or several) graph rewriting system(s) together with algorithms for applying the rewrites, so despite the fact that some rewrites, like the famous Wadsworth-Lamping beta, are the same, the accent is on the rewrites plus the application algorithm. In chemlambda we work at the graphical level directly, for graphs not decorated with lambda terms, not at the lambda calculus level.

Thank you for your very prompt and detailed reply. I have objections for three of the four differences you pointed out.

2. Indeed, there is no such restriction to the lambda-calculus. Lamping graphs and interaction nets allow further generalisation. They can be (and have been) used to represent many other different systems, such as cut-elimination of formal logics, reduction in process algebras.

3. Interaction nets and sharing graphs do not include non-local features. Croissants, for instance, are nodes, and the rewriting rules they are involved in always involve only one other node.

4. I do not get the point of such difference. Also the study of sharing graphs and interaction nets sometimes need to focus on what you call the application algorithm (the community of rewriting theory call it the reduction strategy).

BTW, Wadsworth technique is quite unrelated to Lamping one, and you should confuse them together.

The previous reply maybe too long. It would be a good exercise to really compare these things. It might go like this: you pick the system you think is closest to chemlambda and we try to answer to the questions: 1) which is the class of graphs? 2) which are the rewrites? 3) which are the algorithms of application of the rewrites? 4) as concerns lambda calculus, which one to pick? (I prefer pure untyped lambda beta, without eta) 5) define the function lambda2graphs, compare for some commonly agreed lambda terms, remark that lambda2graphs is/is not surjective. 6) define the function graphs2lambda, remark that is/is not defined everywhere and lambda2graphs and graphs2lambda are not one the inverse of the other 7) the intermediary graphs in a computaton are/are not in the image of lambda2graphs, is the model actually formulated at the level of lambda terms or at the level of graphs? does the algorithm of rewrite applications work for any initial graphs? what are interesting subjects of research? 8) what does the model of computation says about graphs which are not in the image of lambda2graphs?

I shall try to answer some of your questions. Since there a couple of decades of scientific literature about interaction nets and sharing graphs, I think you should probably already had answered them, at least to some extent, in order to relate your work to the previous ones.

1,2, 3. Essentially the same.

4. Pure lambda-calculus with beta is the one.

5, 6. You are right. That is true also for interaction nets or sharing graphs.

7a. At the level of graphs.

7b. Yes. It should be pointed out that if one start from an arbitrary graph, which may not meaningful (as a lambda-term or more generally), makes almost impossible to say that the rewriting “works”.

7c. Too broad to be discussed here.

8. What do you mean? Can you elaborate more this point, please?

Just seen your second comment. Thank you for them! I had thousand of hours of discussions on this subject, some of them too vague for my taste:) Re:

2. Cut elimination is not really different, process algebras are indeed far more interesting. However, while all these can be seen as graph rewrite systems, how much can one go with purely local notions? For example whenever when you take a closure of a relation to make an equivalence, say, that’s a global notion. That’s why I choose each time to not go in that direstion, not that it’s uninteresting, is just overstudied.

Then, process algebras are complex beasts, let’s stay at the level of trivalent, maybe 2- and 1-valent graphs (say oriented fatgraphs/ribbon graphs with colored nodes), with no particular global embeding in the plane (no global space, time, simultaneity or other tricks like this introduced by the backdoor). Say I want to study a class of graphs which don’t have any root node, can I? Or with any number of root nodes, etc. or those who are not particularily coming from a syntax tree which is further fleshed up. For example those I call quines, i.e. graphs with the property that wrt to the deterministic algorithm of applying all possible rewrites (with a priority order to eliminate conflicts) have a periodic evolution.

3. Certainly this is not completely true, because brackets and croissants are all about contexts which are nonlocal, maybe unless if you decorate the edges of the graph (according with local rules, agred), in which case after any rewrite you have to do a global decoration again. Here chemlambda does not allow to decorate the edges (although it may be useful sometimes, some types systems appear only as decorations for example). The alternative is that chemlambda has two fanouts, one called FO and another FOE, which together with the fanin FI make a group which is independent from application, lambda, etc. One can easily use the same triple FI, FO, FOE with Turing machines, is just a fun exercise to do that, like here with busy beavers http://chorasimilarity.github.io/chemlambda-gui/dynamic/turingchem.html Moreover we can put all together, nodes A and L coming from lamdba calculus, with busy beavers running on multiple tapes with multiple heads

4. For me this difference is very important, because many times what is true depends on what is the algorithm (of reduction) beneath. As an example independent from what we discuss here, the asynchronous Game of Life is far less interesting than the usual synchronous version. My main reason of interest is related to the fact that (real) chemistry is, to simplify, a not too big async graph rewrite automaton, with probabilities depending on the space embeddings of the graphs. We are made by that stuff and it works without any global notion, without any need to reject (btw) graphs which cannot be globally decorated by local rules (i.e. they have no global meaning).

I mention Wadsworth and Lamping together because they have the same beta rewrite, shockingly similar 🙂

A short addition, to be clear. I highly respect the researchers and the results obtained in decades of dedicated CS research. I use this chemlambda subject as an experiment in Open Science, I do this on purpose and not because I want to neglect or disregard others. Since there is already a big amount of stuff about chemlambda which is public, I welcome any public critic or contribution, reference, citation, validation attempt, refutation, etc. The accent is on “public”. The benefits are multiple, still this is an experiment and some authority figures may feel uneasy about this. On the other side, is not easy to find the right balance between opinion and validation passing facts. There is the time factor, too. There’s also that my main interest is geometry related, not CS classical subjects, or here there is a huge class of things which geometers do know since ages. Now I know that, for example, my initial treatment of emergent algebras are related to computation exactly because they are based on an abstract nonsense which is a flavored version of the FI,FO,FOE triple mentioned before. However, instead of being an usually neglected part by most (not by the sharing graphs community of course) consisting in name management kitchen details, the computational content of emergent algebras turns out to be interesting (for me) more than lambda calculus with it’s accidental pair of application and lambda nodes. There’s lot to be said about this, not the moment here.